Kiam: Iterating for Security and Reliability

Kiam bridges Kubernetes’ Pods with Amazon’s Identity and Access Management (IAM). It makes it easy to assign short-lived AWS security credentials to your application.

We created Kiam in 2017 to quickly address correctness issues we had running kube2iam in our production clusters. We’ve made a number of changes to its original design to make it more secure, more reliable and easier to operate. This article covers a little of the story that led to us creating Kiam and more about what makes it novel.

Background and Rationale for Kiam

I suspect like many users of Kubernetes we are in the process of moving lots of small application teams from independently running their own almost identically shaped AWS infrastructure to centrally operated, soft multi-tenant clusters. “Evolving our Infrastructure”, written by my colleague Tom Booth, covers the rationale for this in more detail and is also worth a read.

When we were experimenting and planning our deployment in late 2016 we knew we needed a way to integrate our new Kubernetes applications with Amazon Identity and Access Management (IAM) to ensure we could use per-application credentials with IAM Roles. Fortunately there was already a project that provided precisely this integration: kube2iam.

We created our initial clusters, deployed kube2iam, and started to help teams migrate. Teams initially adapted and deployed their applications with the help of the Cloud team, the team responsible for operating the Kubernetes clusters, but after a while, as familiarity and confidence grew, teams were increasingly able to move on their own.

Over the next few months more teams migrated their applications. One morning, a few months after the first teams had moved onto the clusters, the Cloud team had reports that applications were broken: AWS operations, like fetching an S3 object, were being denied. Applications were permitted to perform the operations and had previously worked, but were now failing sporadically.

Examining the logs revealed applications were using incorrect credentials: the proxy was issuing another role’s credentials to the application. We found the issue reported on kube2iam’s issue tracker and thought about what we could do.

By this point a few teams had already been running their applications for a couple of months on the new clusters and no longer had older environments they could easily switch back to. The nature of the bug was nondeterministic: credentials would issue correctly for a while and other times they wouldn’t. Restarting pods sometimes fixed it but it wasn’t reliable. Cluster load continued to climb and ever more teams reported errors.

After a somewhat fraught day investigating we advised teams to use per-application long-lived credentials with the relevant IAM policy for a few days while we fixed the problem. Credentials were stored as Kubernetes secrets and mounted via the perennial AWS_XXX environment variables, buying us a little time to fix properly.

Given the time pressure we decided it would be faster to write a small replacement for kube2iam: we only needed a subset of what kube2iam supported and realised that the Kubernetes’ Go client library already had robust implementations of any data structures we’d need. If we cut down everything we needed to do it’d be simpler than trying to adapt an existing codebase and we could be purely selfish in our decision making: focus on the problem rather than maintaining other features we didn’t need.

We had our first version deployed within a day or two and, after a few bumpy events from client applications failing to retry failed credential operations, everything was stable again for a while. Applications were being issued the correct credentials. We took a breath, removed the temporary long-lived credentials and moved on to something else.

After a few more months, however, and as cluster workloads continued to increase, we started getting a few more reports of errors. We reviewed the logs and metric data to hypothesise what the cause might be. All data pointed to contention around Kiam handling large batches of state updates: back-pressure was being applied that slowed down the consumption of state updates into the caches.

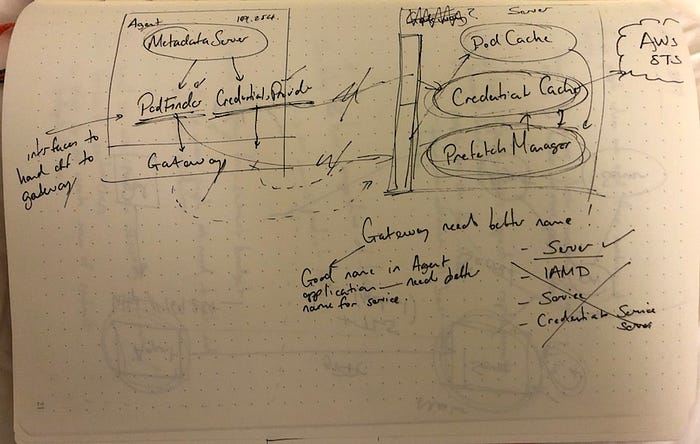

We believed we could address these errors, and deliver some other significant improvements, through some larger design changes to Kiam. I spoke to people, drew lots of pictures and thought about it while traveling.

Kiam Improvements

Kiam now offers:

- Increased security by splitting the process into two: an agent and server. Only the server process needs to be permitted to perform sts:AssumeRole. Cluster operators can place user workloads on nodes with only essential IAM policy necessary for kubelet. This guards against privilege escalation from an application compromise.

- Prefetching credentials from AWS. This reduces response times as observed by SDK clients which, given very restrictive default timeouts, would otherwise cause clients to fail.

- Load-balancing requests across multiple servers. This helps deploy updates without breaking agents. We observed SDK behaviour in production where applications would fail as soon as the proxy was restarted even when applications held valid, unexpired credentials. It also protects against errors interacting with an individual server.

These changes are the most significant parts where Kiam deviates from kube2iam. Most are also largely a result/benefit of separating Kiam into two processes: server and agent. I’ll now go through each in a little more detail.

Agents and Servers

Kiam originally used exactly the same model as Kube2iam: a process deployed onto each machine via a DaemonSet. It was simple to deploy and reason about, and allowed us to quickly fix the data race issue that caused outages for our production cluster users.

This worked for a few months but, as the number of running Pods increased and, along with it, the number of pods requiring AWS credentials, we started to hit other client errors:

NoCredentialProviders: no valid providers in chain

These errors were caused by clients requesting credentials through the Kiam proxy when Kiam was unable to respond in time, either because 1) it hadn’t yet added the pod data to its cache or 2) it was unable to fetch credentials from AWS quickly enough.

Before exploring more about how Kiam’s design was updated to reduce the likelihood of these errors it’s worth covering a little more about the AWS SDKs.

AWS SDKs and credentials

AWS SDK clients generally support a number of different providers that can fetch credentials to be used for subsequent API operations. These are composed together into a provider chain: providers are called in sequence until one is able to provide credentials.

In the early days of AWS and EC2 most users would’ve been familiar with doing something like:

$ export AWS_ACCESS_KEY_ID=AKXXXXXX

$ export AWS_SECRET_ACCESS_KEY=XXXXXXXXXXXXXX

$ aws ec2 ...

This works with one of the SDK’s credential providers: keys are read from environment variables to be presented to AWS during API calls.

One other credential provider is the Instance Metadata API: http://169.254.169.254 on an EC2 instance. This metadata API is what both kube2iam and Kiam proxy to provide seamless IAM integration for clients using AWS SDKs on Kubernetes; requests for credentials are processed by the proxies, everything else is forwarded to AWS.

Within the Java SDK the base credentials endpoint provider, which the instance credentials provider extends, has a default retry policy of CredentialsEndpointRetryPolicy.NO_RETRY. If a client experiences an error interacting with the metadata API it won’t be retried by the SDK and instead must be handled by the calling application.

This is problematic for systems like kube2iam and Kiam that intercept and proxy the Instance Metadata API: when a client process requests credentials via InstanceProfileCredentialsProvider it isn’t talking directly to AWS but to the HTTP proxy, and there are more reasons the proxy may fail to respond successfully than Amazon’s own API. Further, the EC2CredentialsUtils package used by InstanceMetadataCredentialsEndpointProvider also specifies no retries.

It’s not just errors/failures that would cause the client to fail: there are also timeouts specified within ConnectionUtils that are used within the SDK:

- Connect Timeout: 2 seconds

- Read Timeout: 5 seconds

Those timeouts may seem generous but remember that should either of them be exceeded, or a failure response be returned, the credential providers are not configured to retry. Credentials are obtained from the Security Token Service (STS) which, in our experience, can be amongst the slower APIs and can approach the timeouts I mentioned above.

Timeouts and lack of retries within the SDK clients force Kiam to do a lot to avoid transferring a fault to a calling application and causing an error. Given the high degree of fan-in to a service like Kiam this could cause a significant impact to a cluster’s applications.

AssumeRole on Every Node?!

Kiam’s original DaemonSet model was a proxy on each node and thus every node would need IAM policy to permit sts:AssumeRole for all roles used on the cluster.

We use per-application IAM roles to ensure that application processes only have the access into AWS that they need. With every node able to sts:AssumeRole any role, the end result is that every node can still access any role and thus the union of AWS APIs permitted by the different application policies.

Although we could start associating subsets of nodes to groups of roles (per-team, for example) we didn’t want to have to get into managing different groups of nodes to improve our IAM security.

What makes Kiam novel

I’d now like to explain a little more about how Kiam’s novel design mitigates these issues.

The necessity of Prefetching

Kiam uses Kubernetes’ client-go cache package to create a process which uses two mechanisms (via the ListerWatcher interface) for tracking pods:

- Watcher: the client tells the API server which resources it’s interested in tracking and the server will stream updates as they’re available. Think of these as deltas to some state.

- Lister: this performs a (relatively expensive) List which retrieves details about all running pods. It takes longer to return but ensures you pick up details about all running pods, not just a delta.

As Kiam becomes aware of Pods they’re stored in a cache and indexed using the client libraries’ Indexers type. Kiam uses an index to be able to identify pods from their IP address: when an SDK client connects to Kiam’s HTTP proxy it uses the client’s IP address to identify the Pod in this cache.
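The lookup described above can be sketched without client-go. This is a simplified stand-in, not Kiam's code: `Pod`, `PodCache` and `ClientIP` are hypothetical, and a plain map guarded by a mutex takes the place of the client library's indexed store.

```go
package main

import (
	"fmt"
	"net"
	"sync"
)

// Pod holds only the fields this sketch needs.
type Pod struct {
	Name string
	IP   string
	Role string // the IAM role the pod is configured to use
}

// PodCache indexes pods by IP address.
type PodCache struct {
	mu   sync.RWMutex
	byIP map[string]Pod
}

func NewPodCache() *PodCache { return &PodCache{byIP: make(map[string]Pod)} }

// Upsert records a pod announced by a watch event or a full list.
func (c *PodCache) Upsert(p Pod) {
	if p.IP == "" {
		return // pods without an IP cannot be indexed yet
	}
	c.mu.Lock()
	defer c.mu.Unlock()
	c.byIP[p.IP] = p
}

// Delete removes the pod registered for an IP, if any.
func (c *PodCache) Delete(ip string) {
	c.mu.Lock()
	defer c.mu.Unlock()
	delete(c.byIP, ip)
}

// RoleForIP returns the role of the pod with the given IP.
func (c *PodCache) RoleForIP(ip string) (string, bool) {
	c.mu.RLock()
	defer c.mu.RUnlock()
	p, ok := c.byIP[ip]
	return p.Role, ok
}

// ClientIP extracts the IP from an address such as http.Request.RemoteAddr.
func ClientIP(remoteAddr string) (string, error) {
	host, _, err := net.SplitHostPort(remoteAddr)
	return host, err
}

func main() {
	cache := NewPodCache()
	cache.Upsert(Pod{Name: "reports-1", IP: "10.0.0.7", Role: "reports-reader"})

	ip, _ := ClientIP("10.0.0.7:51234")
	role, ok := cache.RoleForIP(ip)
	fmt.Println(role, ok)
}
```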

It’s important then that when an SDK client attempts to connect to Kiam the Pod cache is filled with the details for the running Pod. Based on the Java client code we saw above Kiam has up to 5 seconds to respond with the configured role and so, by extension, Kiam has 5 seconds to track a running Pod.

If Kiam can’t find the Pod details in the cache it’s possible the details from the watcher haven’t yet been delivered (but may eventually be). Inside the agent we include some retry and backoff behaviour that will keep checking for the pod details in the cache up until the SDK client disconnects. Ideally the pod details will either be filled by the watcher or lister processes within time.

Kiam’s retries and backoffs use the Context package to propagate cancellation from the incoming HTTP request down through the chain of child calls that Kiam makes. This cancellable context lets us wait as long as possible for the operations to succeed and has been hugely helpful for writing a system that honours timeouts and retries.

Credential prefetching

Alongside maintaining the Pod cache the other responsibility of the Server process is to maintain a cache of AWS credentials retrieved from calling sts:AssumeRole on behalf of the running pods.

Originally, to keep things simple and obvious, Kiam used to request credentials upon request. When a client connected we would make a request to AWS in-band, store the fetched credentials in a cache and then keep refreshing them as long as the Pod was still running. But, as we saw above, the expectation of AWS SDK clients is that the metadata API returns very quickly. Kiam and kube2iam both use Amazon STS to retrieve credentials, which is quite a bit slower than the metadata API.

We have metrics tracking the completion times for the sts:AssumeRole operation and generally it’s very stable: typical 99th percentile times of 550 milliseconds. Every now and then though it goes beyond that. Below is a plot from our monitoring showing an increase in both max (yellow) and 95th percentile (blue) to well over a few seconds. Although this was a few months ago this isn’t atypical (it’s just taken me a long time to write this article :).

It’s quite normal for such spikes to happen but it’s problematic if we hit slow responses from AWS when fetching credentials in-band: a slow response from STS would cause us to propagate a failure to our SDK clients given their strict retry and timeout policies. To mitigate this Kiam prefetches credentials.

When a Pod is tracked through an update from a Kubernetes API watcher or from a full sync it’s added to a buffered channel with the prefetcher on the other side. The prefetcher requests credentials and stores them in the credentials cache ahead of the client requesting them.
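The buffered channel arrangement might look something like the following sketch. The names (`FetchFunc`, `CredentialCache`, `RunPrefetcher`) and the buffer size are assumptions for illustration, and the fetch function is a stub rather than a real sts:AssumeRole call.

```go
package main

import (
	"fmt"
	"sync"
)

// FetchFunc is a hypothetical stand-in for the sts:AssumeRole call.
type FetchFunc func(role string) (string, error)

// CredentialCache is a minimal concurrency-safe map from role to credentials.
type CredentialCache struct {
	mu    sync.RWMutex
	creds map[string]string
}

func NewCredentialCache() *CredentialCache {
	return &CredentialCache{creds: make(map[string]string)}
}

func (c *CredentialCache) Put(role, creds string) {
	c.mu.Lock()
	defer c.mu.Unlock()
	c.creds[role] = creds
}

func (c *CredentialCache) Get(role string) (string, bool) {
	c.mu.RLock()
	defer c.mu.RUnlock()
	creds, ok := c.creds[role]
	return creds, ok
}

// RunPrefetcher drains roles until the channel is closed, warming the cache.
func RunPrefetcher(roles <-chan string, fetch FetchFunc, cache *CredentialCache) {
	for role := range roles {
		if _, ok := cache.Get(role); ok {
			continue // already cached, nothing to do
		}
		creds, err := fetch(role)
		if err != nil {
			continue // best effort: the request path can still fetch later
		}
		cache.Put(role, creds)
	}
}

func main() {
	// Buffered so that pod tracking is not blocked by a slow fetch.
	roles := make(chan string, 100)
	cache := NewCredentialCache()
	done := make(chan struct{})
	go func() {
		defer close(done)
		RunPrefetcher(roles, func(role string) (string, error) {
			return "credentials-for-" + role, nil
		}, cache)
	}()

	// The pod tracker would send a role each time it learns about a pod.
	for _, role := range []string{"reader", "writer", "reader"} {
		roles <- role
	}
	close(roles)
	<-done

	creds, ok := cache.Get("writer")
	fmt.Println(creds, ok)
}
```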

Prefetching is an optimisation: if a pod requests credentials for a role and the credentials cache doesn’t already have them they’ll be requested again. We also use a Future to wrap around the AssumeRole operations within the cache to avoid requesting credentials for the same role repeatedly while waiting for credentials to be issued.

Prefetching helps us to smooth out interactions with the STS API and return responses to clients far quicker. To see just how much of a difference there’s another plot a little later that covers the same period as above.

Reduced AssumeRole API growth

Prefetching was one of the drivers for why we chose to split Kiam into server and agent processes: it would’ve been too costly for us, in terms of time and volume of AWS API calls, to do when running as a homogenous process where each process on each node would request credentials for all roles.

In the original homogenous model the number of sts:AssumeRole API calls would grow as Nodes * Roles: adding an additional node or role results in more than 1 additional call and, on a large dynamic cluster, this could be quite significant. Historically we’d also seen our STS operation durations suffer when we hammered it.

One solution is for each node to only request credentials that it’s currently using. However, if the proxy process restarted (because of an upgrade, failure etc.) it would lose all credentials and refilling the caches could be slow. We’d observed previously that SDK clients immediately raised exceptions in such a situation, causing applications to error.

Kiam’s novel Agent/Server separation causes sts:AssumeRole calls to grow as Servers * Roles but given Servers are normally pinned to a subset of nodes, with a near constant number of replicas, API growth can be simplified to linear with regards to Roles.
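As a worked example of the two growth rates, with made-up numbers (50 roles, 100 or 200 nodes, 3 servers) rather than figures from our clusters:

```go
package main

import "fmt"

// assumeRoleCalls returns how many credential sets must be fetched when each
// of the given number of fetching processes holds credentials for every role.
func assumeRoleCalls(fetchers, roles int) int {
	return fetchers * roles
}

func main() {
	const roles = 50

	// Original model: every node runs a proxy that fetches for all roles.
	fmt.Println("100 nodes:", assumeRoleCalls(100, roles))
	fmt.Println("200 nodes:", assumeRoleCalls(200, roles))

	// Agent/Server model: only the servers fetch, however many nodes exist.
	fmt.Println("3 servers:", assumeRoleCalls(3, roles))
}
```

Doubling the node count doubles the calls in the first model, while in the second the figure only changes when roles (or servers) are added.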

Fetching credentials out-of-band before a client requests them reduces the impact of a slow AWS response. Running multiple servers that fetch the same credentials provides a degree of resilience to an individual server failure to track a pod/fetch credentials: the agent can take credentials from the first server that responds successfully and can retry the operation safely across servers.

Performance Summary

Running multiple servers with redundant caches and prefetching credentials improves the chances the Kiam HTTP proxy performs within the expectations of the AWS SDK client.

Earlier I showed a plot highlighting a period of severely slow responses from the STS API that would cause any SDK client with default timeouts to fail. The plot below covers the same period as before but shows the Kiam agent’s response times. The max (yellow) is just over 1 second (when AWS’ response was over 10 seconds) and the 95th percentile (blue) time is closer to 47ms. Both are well within the limits of the SDK clients.

Increased Security

As mentioned earlier, Kiam and kube2iam both followed the same deployment model at the beginning: a DaemonSet that installed itself via iptables on every node in the cluster. Such a deployment requires all nodes running user pods to have IAM policy attached that permits the sts:AssumeRole call. Having all nodes able to assume any role though is problematic in the event of a node being exploited: a host exploit would open access to any role. This is especially undesirable when we want, as far as possible, to run soft multi-tenant, multi-environment clusters.

Carving out a separate Server process meant we could run the server processes on a subset of machines and only they would need sts:AssumeRole. Nodes running user workloads would only require IAM permissions needed for kubelet and nodes running the server processes wouldn’t be permitted to run any user processes.

gRPC communications between the agents and servers are protected with TLS and mutual verification to allow only the agent, and the server’s health checker, to interact with the server.

Deploying updates

When Kiam was still deployed as a single-process DaemonSet we had problems rolling out updates.

Pods would, via their SDK client, have fetched temporary credentials successfully but, because they’re session-based credentials, would also continually attempt to refresh them in the background. When this background refresh failed because the Kiam process was unavailable the SDKs would immediately invalidate the credentials being used and throw errors.

Applications would immediately see their AWS operations fail despite having valid credentials. This problem hit us a number of times and caused a lot of pain for the various service teams that use the clusters.

Running separate Server and Agent processes made it easier for us to independently deploy changes. If the servers are updated we can often deploy them without affecting the agent processes at all. If the agent needs to be updated, however, it can restart far quicker as it no longer needs any caches with credentials or pod data: any such requests are immediately forwarded to the server processes.

Summary

Kiam was originally written to let us quickly address data races causing failures in our production clusters but we ended up making some significant changes to its design to improve reliability and performance at our modest scale.

We’ve been running it since we made the repository public and it’s significantly reduced the number of IAM related issues reported.

Further improvements

There are plenty of things I’d like to see done with Kiam to further improve its security and the user experience. For example:

- Reporting IAM errors via Kubernetes’ Events API

- Tightening the permissions the Agent container needs to manipulate iptables rules

- Experimenting with the current load-balancing behaviour of the client to make concurrent, competing requests against servers and use the fastest valid response

- Deprecating the existing metric collection and replacing it with Prometheus exclusively

Most of these are in the project’s Issue tracker on GitHub. I’d be delighted to help people pick something up if they were interested.

If you need to contact me I’m on Twitter @pingles and GitHub @pingles. I’m also @pingles on the Kubernetes Slack.